What trends can you find in your test suites?

How much do you know about the trends in your test suites? Whether or not you are tracking them, trends exist between runs. In a perfect test environment, the tests and the CI are perfectly deterministic and all code is equally easy to test. Unfortunately, most test suites are the best we could build in the limited time available. They have some degree of non-determinism or randomness, and some of the areas they cover trip up developers much more often than others.

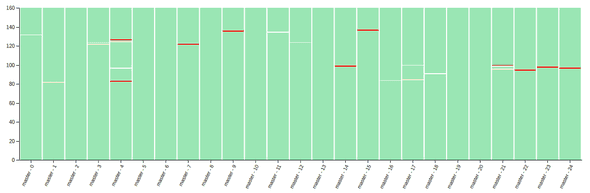

Can you spot the trend below?

Finding trends

These non-deterministic challenges show up as trends over time, and they are hard to see in the default view of test results. By default, we view the results of a test run in isolation: only the results of that run, independent of every other time we ran those tests. Teams often have plenty of analytics and data platforms tracking what happens to code in production, but test code rarely gets the same treatment, which leaves teams relying on memory and intuition.
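To make the idea concrete, here is a minimal Python sketch of looking at results across runs instead of one run at a time. It assumes each CI run's results have already been exported as simple records; the `RunRecord` shape and the `failure_rates` and `flaky_tests` helpers are hypothetical, not part of any particular tool. The sketch ranks tests by how often they fail and flags tests that both passed and failed on the same commit, a common sign of non-determinism.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One test outcome from one CI run (hypothetical record shape)."""
    run_id: str
    commit: str
    test_name: str
    passed: bool


def failure_rates(records):
    """Return {test_name: fraction of recorded runs in which the test failed}."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for r in records:
        totals[r.test_name] += 1
        if not r.passed:
            failures[r.test_name] += 1
    return {name: failures[name] / totals[name] for name in totals}


def flaky_tests(records):
    """Return names of tests that both passed and failed on the same commit.

    Same code with different outcomes suggests non-determinism rather than
    a real regression.
    """
    outcomes = defaultdict(set)
    for r in records:
        outcomes[(r.test_name, r.commit)].add(r.passed)
    return sorted({name for (name, _commit), seen in outcomes.items() if len(seen) == 2})


if __name__ == "__main__":
    # Hypothetical history of three CI runs across two commits.
    history = [
        RunRecord("run-1", "abc123", "test_login", True),
        RunRecord("run-2", "abc123", "test_login", False),
        RunRecord("run-3", "def456", "test_login", True),
        RunRecord("run-1", "abc123", "test_checkout", True),
        RunRecord("run-2", "abc123", "test_checkout", True),
        RunRecord("run-3", "def456", "test_checkout", False),
    ]
    # Most frequently failing tests first.
    for name, rate in sorted(failure_rates(history).items(), key=lambda kv: -kv[1]):
        print(f"{name}: failed in {rate:.0%} of runs")
    print("flaky:", flaky_tests(history))
```

Treating "passed and failed on the same commit" as flaky is only one heuristic. In the sample data, `test_checkout` fails only after a new commit, so it may be catching a real regression rather than behaving randomly, and the sketch deliberately does not flag it.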

What would you find?

What would you find if you had all the data from your test suites? Would you notice some tests that fail more often than others? Would you be able to find more quickly what caused a test to become unreliable, or spot patterns that make tests unreliable across your test suite?

Let us know if you have any ideas on what you would find!